There’s still a good chunk of time to go before AMD and Nvidia’s next-generation cards launch, and details remain scarce for the most part. But if there’s one thing I know, it’s that power consumption will increase. Both RTX 40 and RDNA 3 cards are set to push graphics card power consumption to even higher levels. While TDPs of 450W or more at the high end will get the pre-release attention, it’s looking increasingly like mid-range and low-end cards won’t be immune either.

Tom’s Hardware published an enlightening interview with Sam Naffziger, AMD’s Senior Vice President, Corporate Fellow, and Product Technology Architect. Naffziger made some interesting points. One of the key passages indicates that AMD believes it has a more power-efficient architecture than Nvidia.

“Performance is king,” said Naffziger, “but even if our designs are more power-efficient, that doesn’t mean you don’t push power levels up if the competition is doing the same thing. It’s just that they’ll have to push them a lot higher than we will.”

That last point is interesting. “It’s just that they’ll have to push them a lot higher than we will.” If we take this quote at face value, firstly, it all but confirms that RDNA 3 cards will come with increased total board power levels and secondly, AMD believes that Nvidia’s cards will come with even higher power consumption levels. It’s going to be interesting to see how both companies act, and react to one another’s designs.

If we recall AMD’s claim of a greater than 50% performance-per-watt improvement (which is almost certainly an upper-bound estimate or one specific to a particular SKU), then there’s the possibility, if an unlikely one, that an RX 7900 XT could end up twice as fast as a 6900 XT. Of course, that depends on how aggressive AMD wants to be. Power scaling yields sharply diminishing returns the closer a chip gets to its frequency limits. Is it worth pushing 10, 15, or 20% more power through a card to extract those last few percent of performance?
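To put rough numbers on that, here is a back-of-the-envelope sketch. It assumes the 6900 XT’s 300W reference board power as the baseline, and it optimistically assumes the full 1.5x performance-per-watt gain holds at every power level, which the diminishing returns described above suggest it would not. The function name `required_board_power` is purely illustrative.

```python
def required_board_power(baseline_watts, perf_per_watt_gain, target_speedup):
    """Board power needed to reach target_speedup over the baseline card.

    Assumes (optimistically) that the efficiency gain is constant
    regardless of how hard the chip is pushed.
    """
    return baseline_watts * target_speedup / perf_per_watt_gain


baseline = 300.0  # assumed baseline: RX 6900 XT reference board power, in watts
needed = required_board_power(baseline, perf_per_watt_gain=1.5, target_speedup=2.0)

# Report the power budget and how much higher it is than the baseline.
print(f"{needed:.0f} W ({needed / baseline - 1:.0%} more power than the baseline)")
```

Under those assumptions, doubling performance would take about 400W, roughly a third more than today’s flagship. Since efficiency drops as clocks climb, the real figure would likely be higher.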

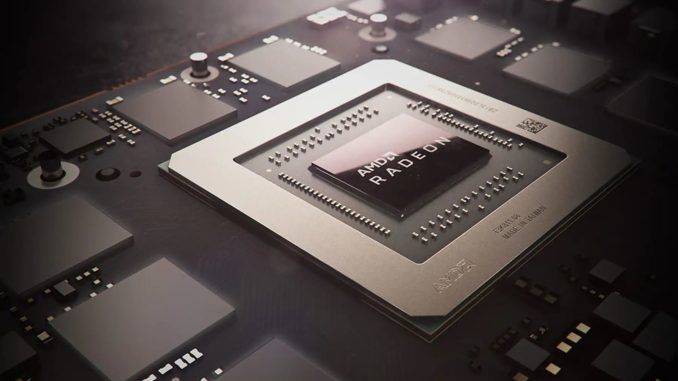

Oh, to be a fly on the wall in those internal meetings. There’s still so much we don’t know about RDNA 3. We know it will be a chiplet-based architecture, and that the chips will be manufactured on TSMC’s 5nm node and will feature optimized Infinity Cache, and probably more of it.

Coming back to power talk, the days of a 300W flagship appear to be over. The RTX 3090 Ti served as a taste of what we can expect going forward. New power supplies with 16-pin PCIe 5.0 power connectors that support up to 600W give as good a sign as any that GPU power consumption is only going to increase in the years ahead. Let’s take this quote from the Tom’s Hardware interview with Naffziger: “The demand for gaming and compute performance is, if anything, just accelerating… So the power levels are just going to keep going up.”

As the world grapples with complicated issues including energy supply, inflation and climate change, the trend towards ever higher GPU power consumption is not a good one. Of course, not all GPUs will feature ridiculous power levels. There will be demand for high-quality and affordable cards that can push good frame rates, though I hope that a performance-at-all-costs mentality doesn’t forget to place at least some emphasis on power efficiency.

We’re going to get great performance from both AMD and Nvidia GPUs, but there has to be a limit or a pushback from consumers. Where does it end? 600W? 800W? Performance is king, but let’s not totally neglect everything else.